Slides from 2020 conference that eventually became this paper

Slides located here.

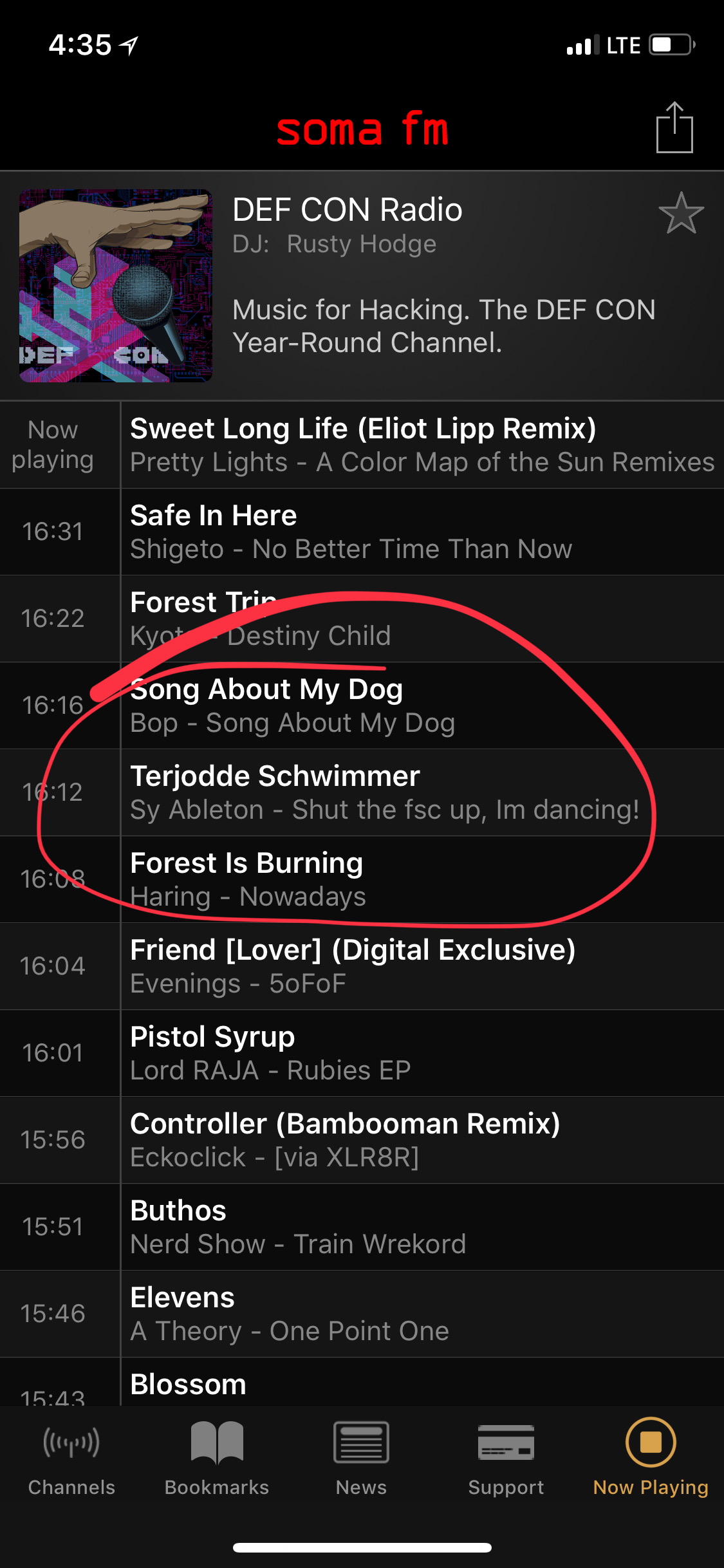

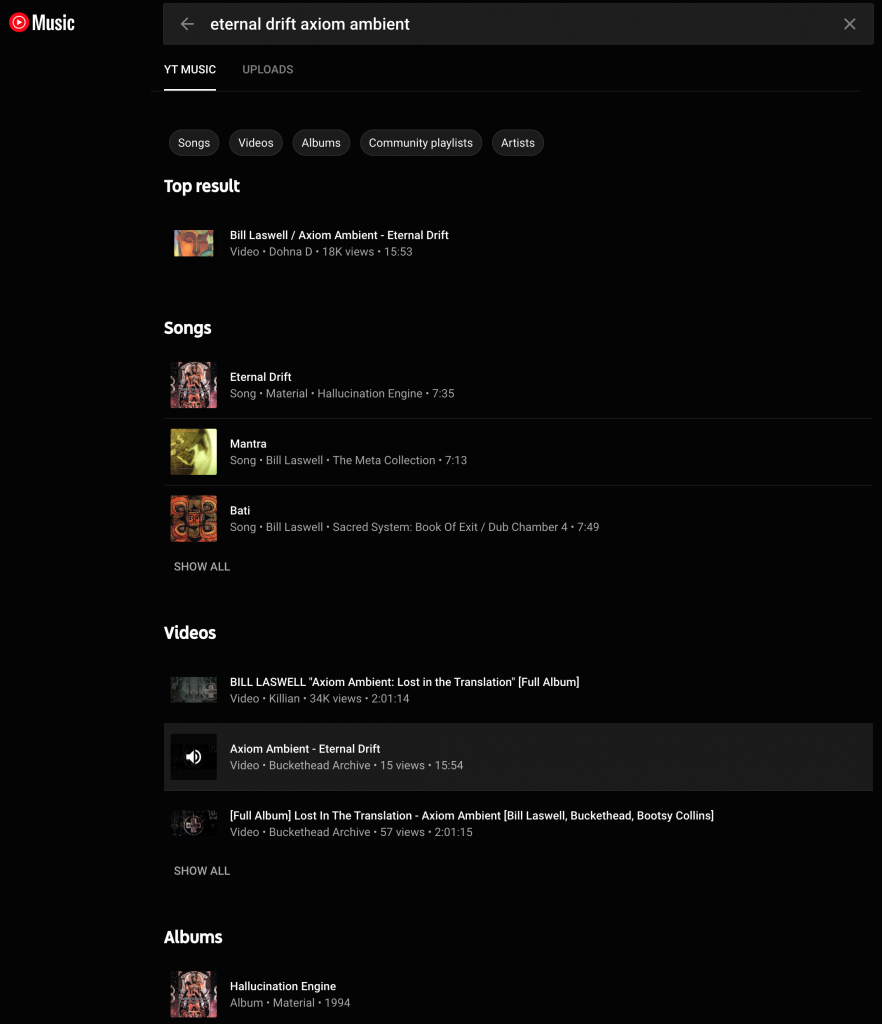

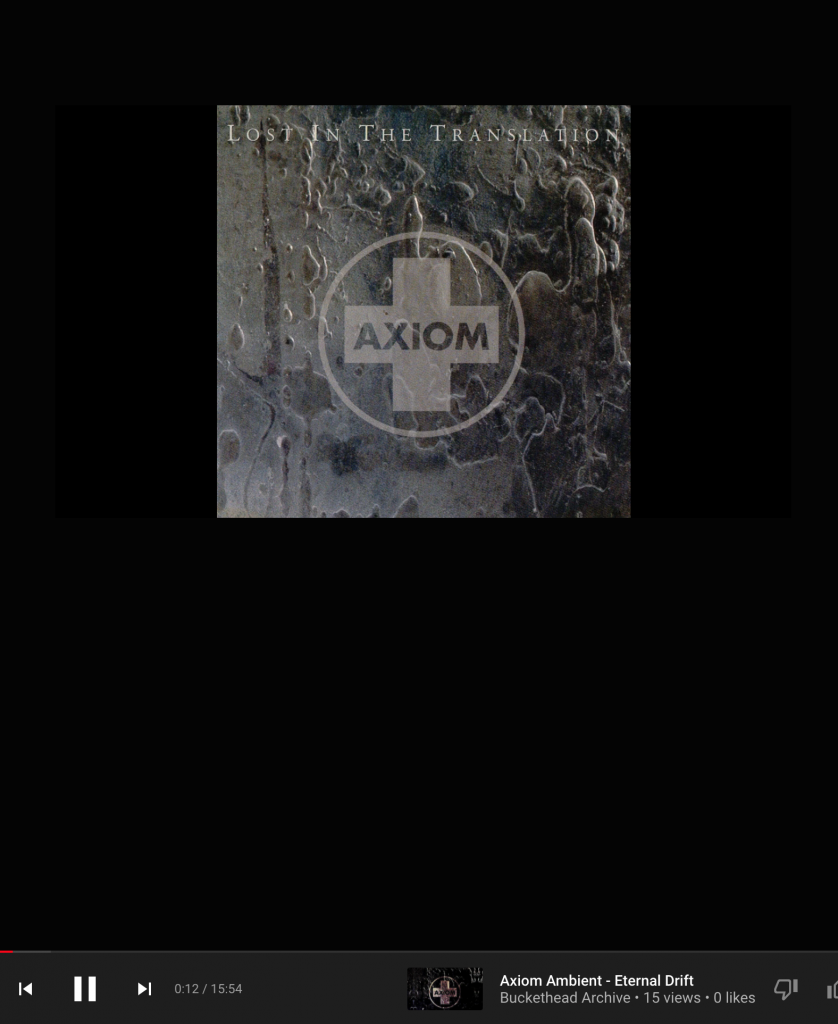

I love music so much that I subscribe to not 0, and not even 1, but 2 streaming music services: YouTube Music (blech, what a name) and Apple Music (hardly better).

Why would I do such a thing?

Here are some notable features of the 1994 Axiom Ambient album, an incredible victory over copyright limitations in its pleasing combination of diverse talents and tools:

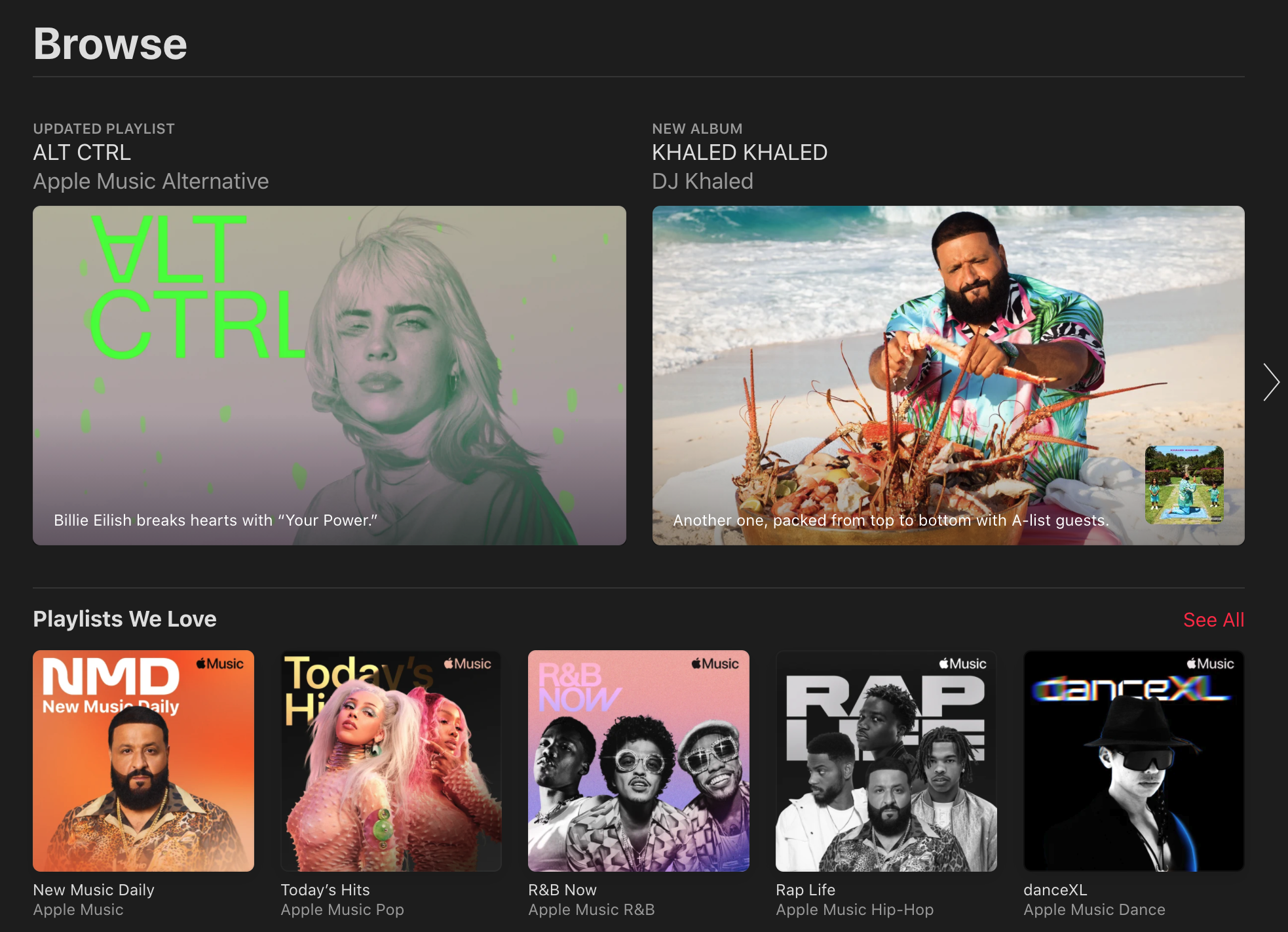

That, and my wife, whose musical taste is better than mine, is willing to explain to me how I can listen to her playlists on the vertically integrated, corporate-pimping, payola-lined hellscape that is Apple Music.

There are three more Khaleds and three more “Khaled”s, and one more Eilish on this landing page than I want despite being logged in to FruitTunes:

Suppose there is a future for non-corporate musicians in large numbers making living wages by streaming music (doubtful). Maybe, and this is a big stretch, one of the ways I can help get there is by spending money on two services, so Bill Laswell can get a few cents towards his month to month (he could be loaded, I have no idea).

Like I said: doubtful.

This is a draft from Sep. 5, 2015. Links may be broken.

CSV voting records are available at http://www.parl.gc.ca/Parliamentarians/en/members by clicking on the member, then on “Work,” and then on “All votes by this Member.” You can see the link I used for Megan Leslie at http://www.parl.gc.ca/Parliamentarians/en/members/Megan-Anissa-Leslie(58550)/Votes, in the “Export: CSV” link. I renamed that file Leslie41parl2session.csv.

regan41parl2session <- read.csv("Regan41parl2session.csv", header = TRUE)

leslie41parl2session <- read.csv("Leslie41parl2session.csv", header = TRUE)

harper41parl2session <- read.csv("Harper41parl2session.csv", header = TRUE)

leslieSmall <- data.frame(leslie41parl2session$Vote.Number, leslie41parl2session$Member.s.Vote)

reganSmall <- data.frame(regan41parl2session$Vote.Number, regan41parl2session$Member.s.Vote)

harperSmall <- data.frame(harper41parl2session$Vote.Number, harper41parl2session$Member.s.Vote)

names(leslieSmall)[1] <- "Vote.Number"

names(reganSmall)[1] <- "Vote.Number"

names(harperSmall)[1] <- "Vote.Number"

smallMerge <- merge(leslieSmall, reganSmall, by="Vote.Number")

smallMerge <- merge(smallMerge, harperSmall, by="Vote.Number")

smallMerge now contains all of Leslie, Regan, and Harper’s votes for the most recent Parliament – that is, all the ones in which all three voted. Let’s take a look at the ones in which Leslie and Regan voted differently, but Regan voted with Harper.
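As a side note on why only votes shared by all three members survive: `merge()` does an inner join unless told otherwise. Here is a minimal sketch on made-up data; the data frames `a`, `b`, `c3` and their vote values are invented for illustration and are not taken from the parliamentary files.

```r
# Toy stand-ins for the three per-member tables (all values invented).
a  <- data.frame(Vote.Number = c(1, 2, 3), a.Vote = c("Yea", "Nay", "Yea"))
b  <- data.frame(Vote.Number = c(2, 3, 4), b.Vote = c("Yea", "Yea", "Nay"))
c3 <- data.frame(Vote.Number = c(1, 3, 4), c.Vote = c("Nay", "Yea", "Nay"))

# merge() performs an inner join by default (all = FALSE), so only
# vote numbers present in every table survive the chained merges.
toyMerge <- merge(merge(a, b, by = "Vote.Number"), c3, by = "Vote.Number")
print(toyMerge)

# Passing all = TRUE keeps unmatched votes and fills the gaps with NA.
toyAll <- merge(merge(a, b, by = "Vote.Number", all = TRUE), c3,
                by = "Vote.Number", all = TRUE)
print(toyAll)
```

In the toy data only vote 3 appears in all three tables, so `toyMerge` has a single row, while `toyAll` keeps all four vote numbers.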

L_Diff_R_H_Same <- smallMerge$Vote.Number[smallMerge$leslie41parl2session.Member.s.Vote !=

smallMerge$regan41parl2session.Member.s.Vote

& smallMerge$harper41parl2session.Member.s.Vote ==

smallMerge$regan41parl2session.Member.s.Vote]

L_Diff_R_H_Same

## [1] 11 71 93 146 147 170 173 174 180 185 190 201 203 240 242 277 279

## [18] 281 282 291 313 314 319 367 456 461 464

It’s a bit more readable to get the subject headings for those votes; note that the indices reported below are local to the vector. The subjects correspond, in order, to the vote numbers above.
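One caveat about the "in order" correspondence: a lookup with `%in%` returns rows in the data frame's own order, so it lines up with the vote numbers only when the table is sorted the same way. A small sketch on invented data shows the difference and how `match()` could be used to force the order. The `votes` table and its `Subject` column name are hypothetical; the real file is indexed here by column position.

```r
# Invented vote table, deliberately stored in descending vote order.
votes <- data.frame(
  Vote.Number = c(30, 20, 10),
  Subject = c("Motion C", "Motion B", "Motion A"),
  stringsAsFactors = FALSE  # keep subjects as plain character strings
)
wanted <- c(10, 30)

# %in% keeps the data frame's own row order.
byIn <- votes$Subject[votes$Vote.Number %in% wanted]

# match() returns row positions in the order of `wanted`.
byMatch <- votes$Subject[match(wanted, votes$Vote.Number)]

print(byIn)
print(byMatch)
```

With the toy table stored in descending order, `byIn` comes back reversed relative to `wanted`, while `byMatch` follows `wanted` exactly.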

leslie41parl2session[leslie41parl2session$Vote.Number %in% L_Diff_R_H_Same,

6]

## [1] Opposition Motion (Keystone XL pipeline)

## [2] 2nd reading of Bill C-504, An Act to amend the Canada Labour Code (volunteer firefighters)

## [3] 2nd reading of Bill C-20, An Act to implement the Free Trade Agreement between Canada and the Republic of Honduras, the Agreement on Environmental Cooperation between Canada and the Republic of Honduras and the Agreement on Labour Cooperation between Canada and the Republic of Honduras

## [4] Government Business No. 10 (Extension of sitting hours and conduct of extended proceedings) (amendment)

## [5] Government Business No. 10 (Extension of sitting hours and conduct of extended proceedings)

## [6] Bill C-31, An Act to implement certain provisions of the budget tabled in Parliament on February 11, 2014 and other measures (report stage amendment)

## [7] Bill C-31, An Act to implement certain provisions of the budget tabled in Parliament on February 11, 2014 and other measures (report stage amendment)

## [8] Bill C-31, An Act to implement certain provisions of the budget tabled in Parliament on February 11, 2014 and other measures (report stage amendment)

## [9] Bill C-31, An Act to implement certain provisions of the budget tabled in Parliament on February 11, 2014 and other measures (report stage amendment)

## [10] Bill C-31, An Act to implement certain provisions of the budget tabled in Parliament on February 11, 2014 and other measures (report stage amendment)

## [11] Concurrence in an opposed item

## [12] 3rd reading and adoption of Bill C-20, An Act to implement the Free Trade Agreement between Canada and the Republic of Honduras, the Agreement on Environmental Cooperation between Canada and the Republic of Honduras and the Agreement on Labour Cooperation between Canada and the Republic of Honduras

## [13] Motion to adjourn the House

## [14] Bill C-13, An Act to amend the Criminal Code, the Canada Evidence Act, the Competition Act and the Mutual Legal Assistance in Criminal Matters Act (report stage amendment)

## [15] Concurrence at report stage of Bill C-13, An Act to amend the Criminal Code, the Canada Evidence Act, the Competition Act and the Mutual Legal Assistance in Criminal Matters Act

## [16] Bill C-18, An Act to amend certain Acts relating to agriculture and agri-food (report stage amendment)

## [17] Concurrence at report stage of Bill C-18, An Act to amend certain Acts relating to agriculture and agri-food

## [18] First Report of the Standing Committee on Agriculture and Agri-Food (amendment)

## [19] First Report of the Standing Committee on Agriculture and Agri-Food

## [20] Opposition Motion (Proportional representation)

## [21] Bill C-44, An Act to amend the Canadian Security Intelligence Service Act and other Acts (report stage amendment)

## [22] Concurrence at report stage of Bill C-44, An Act to amend the Canadian Security Intelligence Service Act and other Acts

## [23] 3rd reading and adoption of Bill C-44, An Act to amend the Canadian Security Intelligence Service Act and other Acts

## [24] Government Business No. 17 (Military contribution against ISIL) (amendment)

## [25] 3rd reading and adoption of Bill S-7, An Act to amend the Immigration and Refugee Protection Act, the Civil Marriage Act and the Criminal Code and to make consequential amendments to other Acts

## [26] Ways and Means motion No. 25

## [27] Motion to concur in the 21st Report of the Standing Committee on Procedure and House Affairs (motion M-489, Election of the Speaker)

## 322 Levels: [number \x96 one to ten in letters, others in numbers with st,rd,th] Report of the [Committee Name] ...

The slides for my talk “The Return of Mind Design: Cognitive Science and the Turing/Ashby Debate”, with Erik Nelson, June 1, 2019 at the University of British Columbia are available here.

Slides located here.

Before you vote on Monday, please find out what your preferred candidate for Ward 13 will do about affordable housing. I believe it can’t be solved any time soon if we don’t take strong action against reseller websites.

A vote for Darren Abramson means a vote for Toronto city action that goes at least as far as Vancouver’s actions.

What will you do about Airbnb taking units from residents and driving up rent?